Having said that, generating too many conditions might also hurt the performance of the search, so it _might_ (but doesn't have to) be better to consider other ways to filter those events.

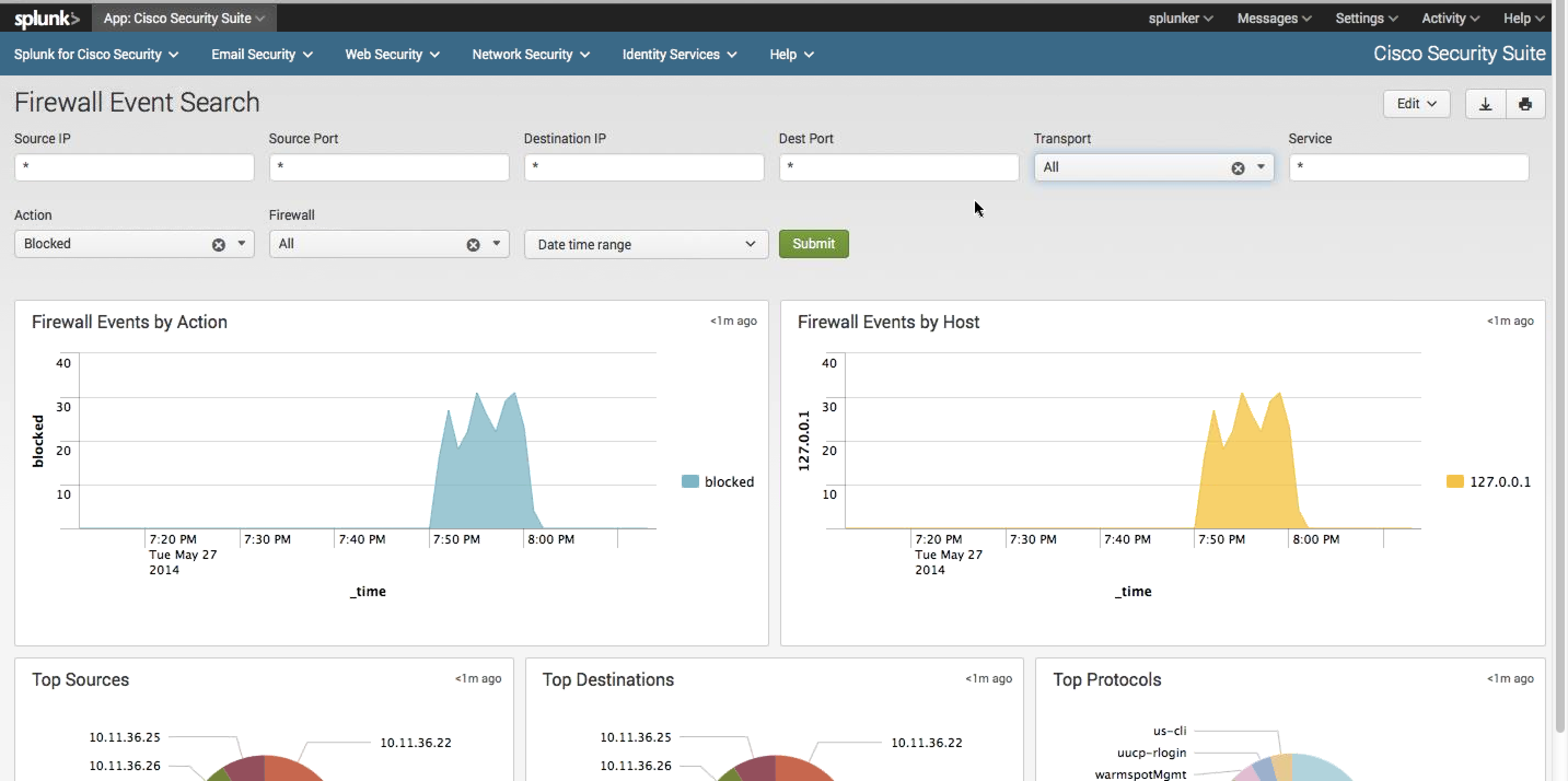

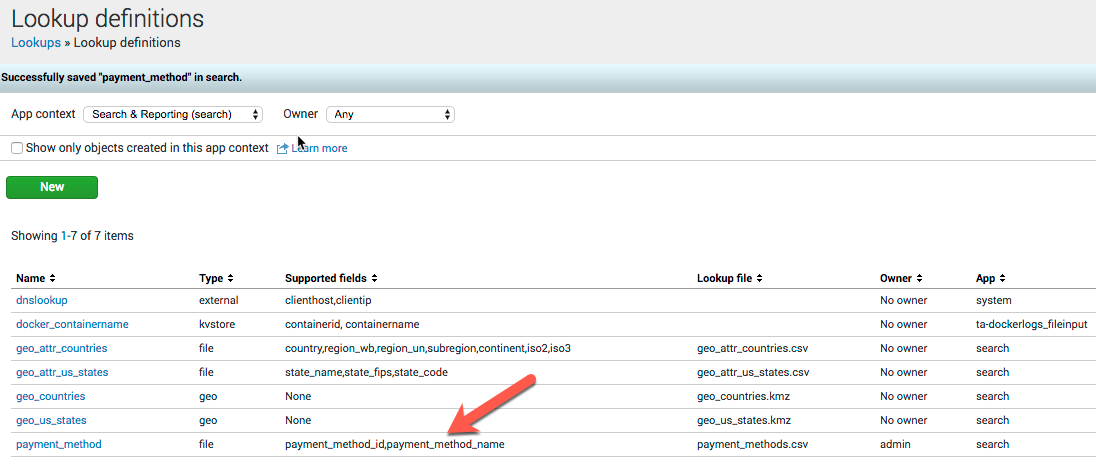

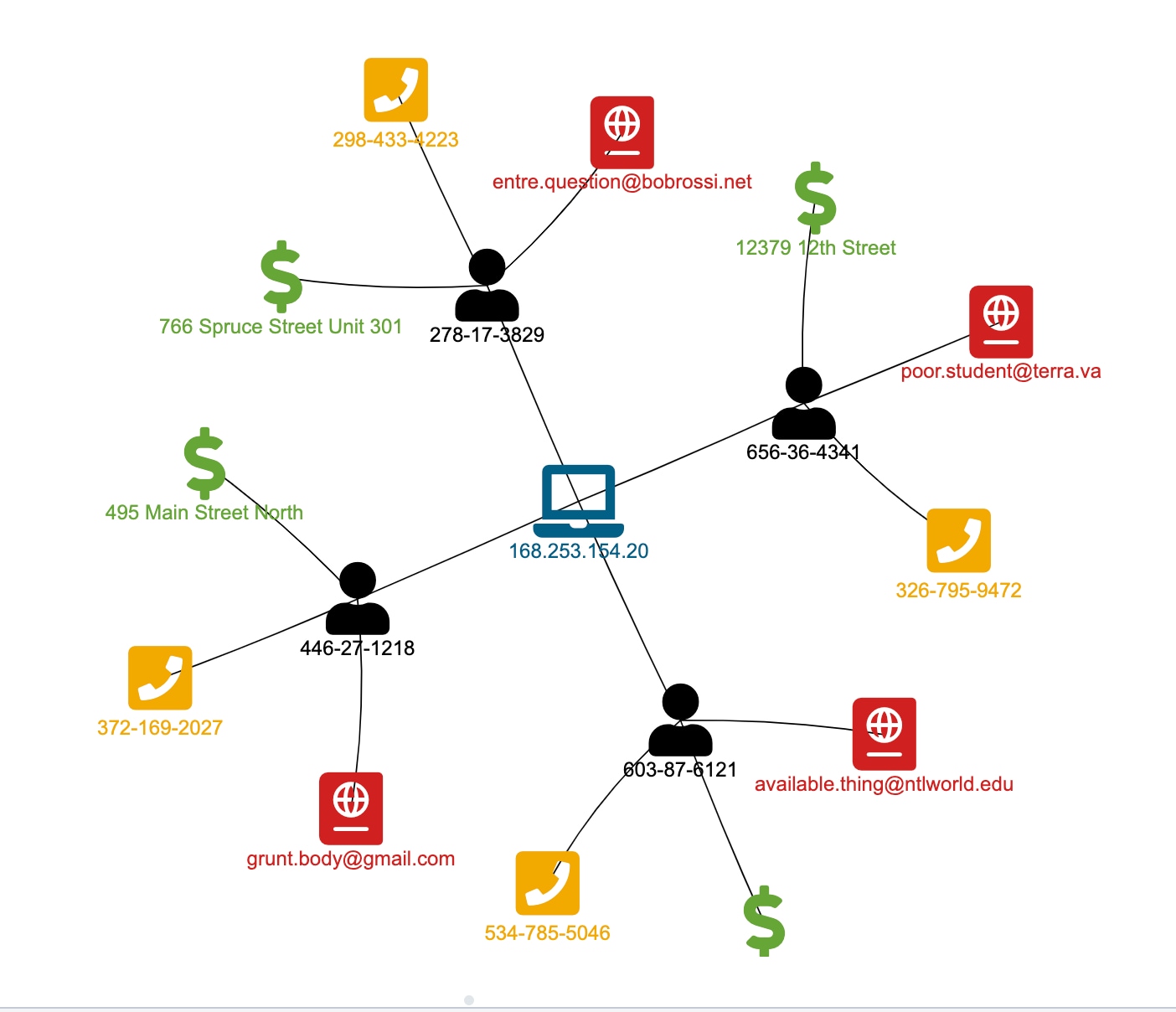

So you can just insert your subsearch into the main search. Don't use the `search | search` construction: Splunk might be able to optimize it away, but generally what you're asking it to do is to search for all events and then filter them (which might be much less efficient than selecting just a subset of events from the indexes).

All, I am new to Splunk and trying to figure out how to return a matched term from a CSV table with inputlookup. I just researched and found that inputlookup returns a Boolean response, making it impossible to return the matched term. With that being said, is there any way to search a lookup table and...

I started out with a goal of appending 5 CSV files with 1M events each. The non-numbered .csv's events all have TestField0, the 1.csv files all are 1, and so on. Don't read anything into the file names or field names; they were simply what was handy to me.

So your search `| inputlookup "report.csv" | fields user` will return a set of results with just the user field, which in turn will get rendered into `user="user1" OR user="user2" OR user="user3"`. If you make your subsearch return three fields (user, earliest, latest), it will get rendered to `(user="user1" earliest=earliest1 latest=latest1) OR (user="user2" earliest=earliest2 latest=latest2)`. So by using clever renames you can reformat your search to include an earliest/latest condition (probably using some evals in the middle to render proper timestamps from your lookup fields).

Hi Splunk experts, I have a search that joins the results from two source types based on a common field: `sourcetype="userActivity" earliest=-1h@h | join type=inner userID [search sourcetype="userAccount" | fields userID, userType] | stats sum(activityCost) by userType`

The 'Splunk' way to do it is to collect all the data in the base search, if possible. Sometimes a subsearch is the only way to solve a problem, but it is usually not the most efficient way. Also, subsearches have limitations that a base search does not.
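To illustrate the join question and the "collect all the data in the base search" advice, here is a small Python model with made-up events. The field names come from the question; the data and helper names are hypothetical. It compares an inner-join style lookup with a single pass that groups both sourcetypes by userID before aggregating by userType. In Splunk, the second shape roughly corresponds to searching both sourcetypes at once and grouping with `stats ... by userID`, with no subsearch. This is a sketch of the idea, not Splunk's execution model.

```python
# Model with hypothetical events: inner join vs. a single grouped pass.
from collections import defaultdict

events = [
    {"sourcetype": "userAccount", "userID": "u1", "userType": "admin"},
    {"sourcetype": "userAccount", "userID": "u2", "userType": "guest"},
    {"sourcetype": "userActivity", "userID": "u1", "activityCost": 5},
    {"sourcetype": "userActivity", "userID": "u1", "activityCost": 3},
    {"sourcetype": "userActivity", "userID": "u2", "activityCost": 4},
    {"sourcetype": "userActivity", "userID": "u3", "activityCost": 7},  # no account
]


def cost_by_type_join(events):
    """join type=inner: look up each activity's userType, drop unmatched ones."""
    user_type = {e["userID"]: e["userType"] for e in events if e["sourcetype"] == "userAccount"}
    totals = defaultdict(int)
    for e in events:
        if e["sourcetype"] == "userActivity" and e["userID"] in user_type:
            totals[user_type[e["userID"]]] += e["activityCost"]
    return dict(totals)


def cost_by_type_single_pass(events):
    """Group everything by userID first, then aggregate by userType."""
    per_user = defaultdict(lambda: {"userType": None, "cost": 0, "has_activity": False})
    for e in events:
        group = per_user[e["userID"]]
        if e["sourcetype"] == "userAccount":
            group["userType"] = e["userType"]
        elif e["sourcetype"] == "userActivity":
            group["cost"] += e["activityCost"]
            group["has_activity"] = True
    totals = defaultdict(int)
    for group in per_user.values():
        # Keep only users seen in both sourcetypes, mirroring the inner join.
        if group["userType"] is not None and group["has_activity"]:
            totals[group["userType"]] += group["cost"]
    return dict(totals)


print(cost_by_type_join(events))         # {'admin': 8, 'guest': 4}
print(cost_by_type_single_pass(events))  # {'admin': 8, 'guest': 4}
```

Both functions give the same totals. The single pass never has to build a separate result set and join against it, which is the intuition behind preferring one base search.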
I have both a source and a destination IP, and it is not readily apparent which one is the blacklisted IP.

A comment from the CrowdStrike search discussed below reads ``` Join the two different event types from above to try to piece together the whole story. ```

Remember that the subsearch is executed _before_ the main search and, as a result, renders a string which is substituted into the main search. In Splunk, a subsearch is enclosed in square brackets and executes first; its output is inserted into the main search before execution continues. If you simply want to use an externally sourced lookup to generate a set of conditions for your search, you can just use a subsearch. I suspect you want to read the CSV and use the list of admin names to filter data in an index.
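The string substitution described above can be modeled in a few lines of Python. This is an illustrative sketch, not Splunk's implementation. It reproduces the simplified rendered forms quoted earlier in this post, whereas Splunk's real output (produced by the `format` command) adds its own parentheses and spacing. The `UNQUOTED_FIELDS` set and the function names are hypothetical choices made to match those examples.

```python
# Illustrative model only (not Splunk's implementation): builds the kind of
# string a subsearch's result rows are turned into before being substituted
# into the main search. Splunk's real `format` output adds extra parentheses
# and spacing; this sketch reproduces the simplified forms quoted in the post.
from typing import Mapping, Sequence

# Hypothetical choice: render time modifiers unquoted, as in the post's example.
UNQUOTED_FIELDS = {"earliest", "latest"}


def render_term(field: str, value: str) -> str:
    """Render one field=value pair, quoting (and escaping) ordinary values."""
    if field in UNQUOTED_FIELDS:
        return f"{field}={value}"
    escaped = value.replace("\\", "\\\\").replace('"', '\\"')
    return f'{field}="{escaped}"'


def render_subsearch(rows: Sequence[Mapping[str, str]]) -> str:
    """AND the fields within each row (space-separated), OR the rows together."""
    clauses = []
    for row in rows:
        terms = " ".join(render_term(field, value) for field, value in row.items())
        # Parenthesize multi-field rows so each row stays a single condition.
        clauses.append(f"({terms})" if len(row) > 1 else terms)
    return " OR ".join(clauses)


if __name__ == "__main__":
    users = [{"user": "user1"}, {"user": "user2"}, {"user": "user3"}]
    print(render_subsearch(users))
    # user="user1" OR user="user2" OR user="user3"

    windows = [
        {"user": "user1", "earliest": "earliest1", "latest": "latest1"},
        {"user": "user2", "earliest": "earliest2", "latest": "latest2"},
    ]
    print(render_subsearch(windows))
    # (user="user1" earliest=earliest1 latest=latest1) OR (user="user2" earliest=earliest2 latest=latest2)
```

Every lookup row adds another OR'd clause. This is why a large lookup can produce a very long search string, which is the performance concern raised at the top.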

This search is designed to work with CrowdStrike FDR data ingested into Splunk. Its comments read ``` Join AssociateIndicator events to the process and command that did them. ``` and ``` Subsearch with our actual logic to get aid + process pairs + PatternId ```. We sub-search for our suspect aid + TargetProcessId combination and use them to look for the associated ProcessRollup2 events: the main search filters on `event_simpleName IN (ProcessRollup2,SyntheticProcessRollup2)`, and the subsearch filters on `(event_simpleName = "AssociateIndicator" PatternId IN ("1","2","3"))`. Another comment reads ``` Identified PatternIds via | inputlookup detect_patterns.csv where description="mything" ```. I will leave the exercise to you to translate this into your own SIEM.

The search command for matching is lookup. (You can do this via Splunk Web.) If your CSV contains a column called primaryWorkEmail, it is as simple as:

If, on the other hand, the corresponding column in the lookup is eMail, for example, do:

I need to filter users' logs to a specified time range. User filtering works (as in your first query), but it doesn't work with the two added starttime and endtime fields, even when they are added in the OUTPUT part. The 'user' field is common to both the CSV and the indexed data, so it is like an inner join.
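The per-user time-range problem can be modeled in Python. The idea is to turn each lookup row into a (user, earliest, latest) window and keep an event only if it falls inside the window for its user. In SPL, the conversion step would be done with `eval` and `strptime()`, and the field names changed with `rename`, so that the subsearch returns user, earliest, and latest. The column names starttime/endtime come from the question. The timestamp format, UTC, the half-open window, and the helper names are assumptions of this sketch.

```python
# Sketch under assumptions: lookup columns are user/starttime/endtime,
# timestamps look like "2024-01-01 00:00:00" and are UTC, and each window is
# treated as half-open (earliest <= _time < latest). It models renaming the
# lookup fields to earliest/latest and converting them to epoch seconds.
from datetime import datetime, timezone

TIME_FORMAT = "%Y-%m-%d %H:%M:%S"  # assumed format of starttime/endtime


def to_epoch(text: str) -> int:
    """Parse a lookup timestamp as UTC and return epoch seconds."""
    parsed = datetime.strptime(text, TIME_FORMAT).replace(tzinfo=timezone.utc)
    return int(parsed.timestamp())


def windows_from_lookup(rows):
    """Rename starttime/endtime to earliest/latest and convert them to epochs."""
    return [
        {
            "user": row["user"],
            "earliest": to_epoch(row["starttime"]),
            "latest": to_epoch(row["endtime"]),
        }
        for row in rows
    ]


def in_any_window(event, windows) -> bool:
    """True if some row has the event's user and a window containing its _time."""
    return any(
        event["user"] == w["user"] and w["earliest"] <= event["_time"] < w["latest"]
        for w in windows
    )


lookup_rows = [
    {"user": "user1", "starttime": "2024-01-01 00:00:00", "endtime": "2024-01-01 01:00:00"},
]
events = [
    {"user": "user1", "_time": 1704067260},  # 00:01 UTC, inside the window
    {"user": "user1", "_time": 1704070860},  # 01:01 UTC, after the window
    {"user": "user2", "_time": 1704067260},  # user not in the lookup
]
windows = windows_from_lookup(lookup_rows)
print([e for e in events if in_any_window(e, windows)])
```

Matching on user alone is not enough. Each user has its own window, so the user and the time bounds must be checked together, row by row.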
